Discover how Path Win Rate measures LLM preference across AI conversations. Learn why mention rate fails and how GEO decisions are formed during AI comparisons.

This article explains Path Win Rate and its role in measuring LLM visibility. Rather than counting appearances in AI-generated answers, this metric assesses whether a brand consistently appears before competitors across realistic decision paths.

This distinction matters because LLM preferences are not fixed. They develop as a conversation progresses: options are compared, constraints tighten, and validation questions arise. What remains at the end of this path is a small group of brands that are not just visible but chosen.

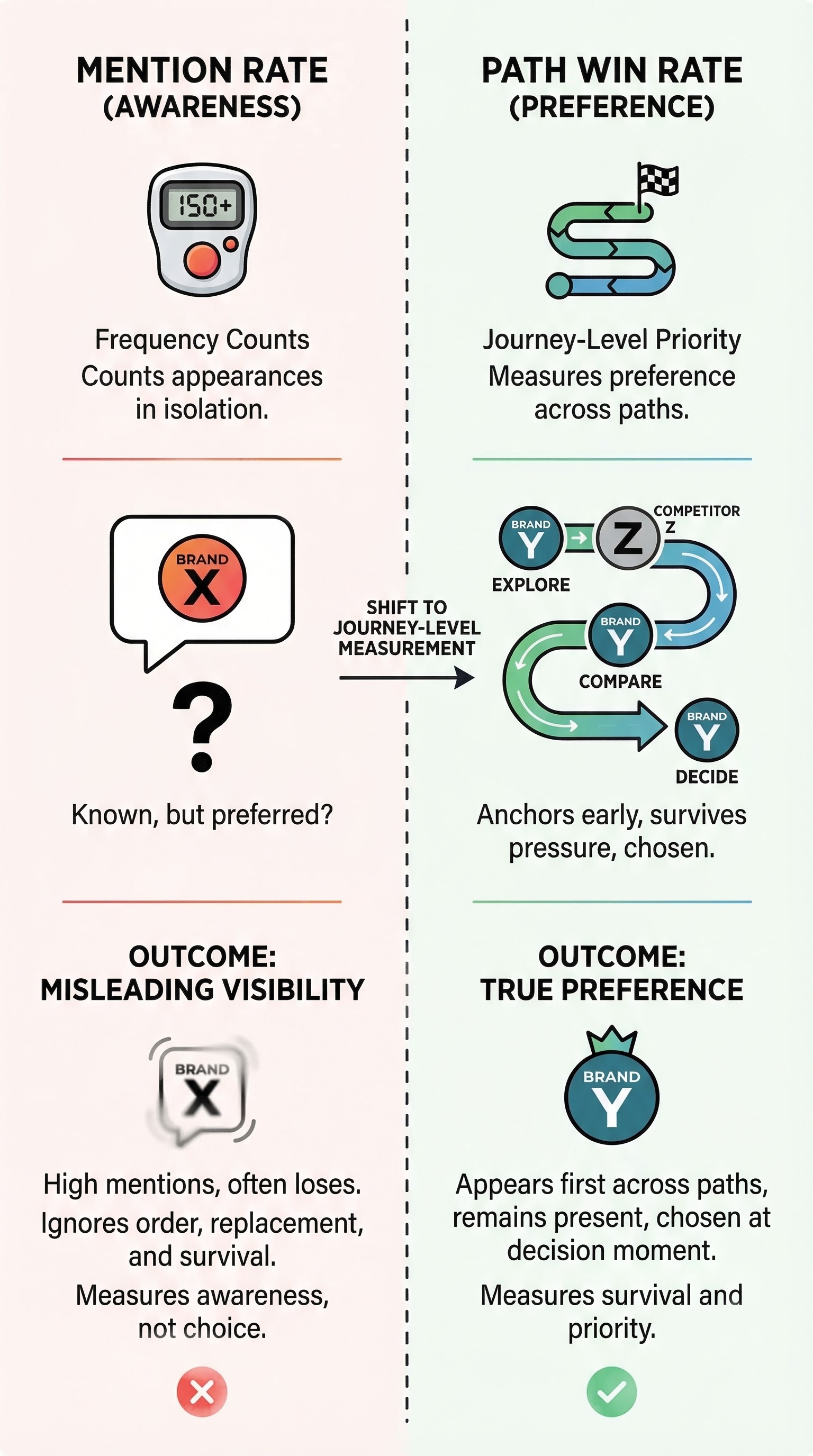

Metrics such as mention rate cannot trace which brands end up chosen. They only tell you that a brand appears at some unknown point along the path. This is precisely why many common GEO efforts look successful yet fail to yield meaningful outcomes.

Below, we explain why mention rate is a misleading metric and how Path Win Rate functions instead. You will learn what influences wins and losses across conversation paths and how to apply these insights to improve content, data, and claims. And don’t miss our Complete GEO Framework for more insights on LLM visibility measurement.

Review your SEO dashboard, now updated with GEO metrics. You will see AI citations indicating how often your domain appears in AI search results. However, it is important to note:

In LLM visibility measurement, the mention rate only counts how often a brand appears in generated answers. It indicates that the model recognizes your brand but does not reveal whether it would choose or recommend your brand when a decision is required.

This distinction is crucial because LLMs do not form preferences at the first mention. Preference develops throughout the conversation path. As the discussion progresses, options are compared, constraints tighten, and risks emerge. The model re-evaluates brands at each stage, and only some withstand this scrutiny.

It’s quite common for a brand to have a high mention rate yet lose consistently. This happens when the brand appears early, is listed among several options, or is referenced neutrally, but then disappears once the model is prompted to compare, validate, or make a decision. What looks like visibility from the outside is actually churn inside the conversation.

Mention rate also fails in another way: it overlooks order and replacement. Appearing after stronger competitors still counts as a mention, but it rarely results in selection. And if your brand appears before those competitors but is repeatedly replaced once constraints are applied, the model does not prefer it, regardless of the earlier mentions. So, let’s define what real preference in LLMs is.
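The gap between a high mention rate and consistent losses can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the data layout (each path as an ordered list of answers, each answer as a list of brand names), the function names `mention_rate` and `final_answer_rate`, and the brands "Acme" and "Bolt" are all hypothetical and not part of any real tool.

```python
# Assumed layout: a path is an ordered list of answers (one per turn),
# and each answer is the list of brands the model named in that turn.
Path = list[list[str]]


def mention_rate(paths: list[Path], brand: str) -> float:
    """Share of all generated answers that name the brand at least once."""
    answers = [answer for path in paths for answer in path]
    if not answers:
        return 0.0
    return sum(brand in answer for answer in answers) / len(answers)


def final_answer_rate(paths: list[Path], brand: str) -> float:
    """Share of paths whose last answer (the decision turn) still names the brand."""
    finished = [path for path in paths if path]
    if not finished:
        return 0.0
    return sum(brand in path[-1] for path in finished) / len(finished)


# Hypothetical example: "Acme" is listed early in every path
# but is gone by the time the model is asked to decide.
example_paths = [
    [["Acme", "Bolt"], ["Acme", "Bolt"], ["Bolt"]],
    [["Bolt", "Acme"], ["Acme", "Bolt"], ["Bolt"]],
    [["Acme"], ["Acme", "Bolt"], ["Bolt"]],
]

print(mention_rate(example_paths, "Acme"))       # mentioned in 6 of 9 answers
print(final_answer_rate(example_paths, "Acme"))  # present in 0 of 3 final answers
```

In this made-up data, the brand is named in two thirds of all answers and still never reaches a final answer, which is the pattern the mention rate alone would hide.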

In simple terms, real preference is expressed through survival and priority, not frequency. Seen this way, it shows up when a brand:

With these nuances in mind, it is fair to call mention rate the most misleading metric for GEO. A metric that measures awareness in isolation cannot reveal true preference. Preference can only be revealed through journey-level behavior, and that is exactly where Path Win Rate comes in.

Let’s define what the Path Win Rate is:

Rather than just counting mentions, Path Win Rate looks at the conversation in more detail. It shows whether the LLM anchors on your brand first when constraints are introduced, and at which decision stages that happens. So what does it mean to win in this context?

A path counts as a win when your brand is introduced earlier than the primary competitor in the same decision journey, which spans stages such as exploration, narrowing, comparison, validation, and decision.

If the competitor appears first or replaces your brand entirely, that path is a loss.
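The win and loss rule above can be sketched as follows. This is a minimal illustration, not a reference implementation: the path layout, the function names, and the brand names are hypothetical. It also makes two assumptions the article does not spell out: when both brands are introduced in the same answer, the one listed first counts as earlier, and a path where your brand never appears is treated as a loss.

```python
from typing import Optional

# Assumed layout: a path is an ordered list of answers (one per turn),
# and each answer is the ordered list of brands named in that turn.
Path = list[list[str]]


def first_mention(path: Path, brand: str) -> Optional[tuple[int, int]]:
    """Return (turn index, position within that turn) of the first mention, or None."""
    for turn_index, brands in enumerate(path):
        if brand in brands:
            return (turn_index, brands.index(brand))
    return None


def is_win(path: Path, brand: str, competitor: str) -> bool:
    """A win means the brand is introduced earlier than the competitor."""
    ours = first_mention(path, brand)
    theirs = first_mention(path, competitor)
    if ours is None:
        return False  # assumption: never being introduced counts as a loss
    if theirs is None:
        return True   # the competitor never shows up on this path
    return ours < theirs  # earlier turn wins; same turn is decided by order


def path_win_rate(paths: list[Path], brand: str, competitor: str) -> float:
    """Share of evaluated paths that the brand wins against the competitor."""
    if not paths:
        return 0.0
    return sum(is_win(p, brand, competitor) for p in paths) / len(paths)


# Hypothetical example with two wins and two losses.
example_paths = [
    [["Acme", "Bolt"], ["Acme"]],   # win: Acme is listed first
    [["Bolt"], ["Bolt", "Acme"]],   # loss: Bolt appears first
    [["Bolt"], ["Bolt"]],           # loss: Acme is replaced entirely
    [["Acme"], ["Acme"]],           # win: Bolt never appears
]
print(path_win_rate(example_paths, "Acme", "Bolt"))
```

A real measurement would run many simulated journeys per topic; the four paths here only show how each outcome is classified.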

To reveal the brand’s true LLM preference, Path Win Rate evaluates many paths, identifying which brand the model gravitates toward as constraints increase. A simple mention rate cannot do that, and the infographic below illustrates three aspects of why exactly it fails and how these issues are solved with Path Win Rate:

So, let’s draw an interim conclusion. If being first across LLM paths reflects fit, confidence, and alignment with the model's understanding of the problem, then Path Win Rate is a metric that measures these factors. Next, we reveal why some brands consistently win paths while others drop out of them.

Below, we explore the top three factors that positively impact path wins. But first, an important insight: path wins are not accidental. They occur only when the model can confidently anchor a brand to the appropriate category, resolve constraints in its favor, and rely on proof assets when uncertainty arises. So, you always have to deal with these three factors that impact LLMs the most:

Combine these elements, and your Path Win Rate should improve. With enough information about your brand (aligned with the three factors that drive path wins), models can confidently introduce it early due to clear categorization, retain it as constraints are met, and continue to trust it at the decision point. This is how preferences form within LLM conversations and why path wins are earned rather than simply counted. Next, we should examine loss paths.

Path Win Rate becomes especially valuable when the focus shifts from how often losses occur to identifying where losses actually happen. And just like path wins, loss paths are rarely random.

Usually, the first drop takes place during constraint tightening, and it indicates missing or unclear constraint data. Even if a brand appears early, unclear information about price, geography, scale, compatibility, or availability may prevent the model from confirming a fit. The most dangerous aspect is that options are removed without criticism: your brand is simply no longer mentioned.

Additional losses occur during comparison stages. When the model evaluates options side by side, it must articulate differences. If a brand lacks explicit tradeoffs, measurable advantages, or clear winning criteria, the model defaults to other options that are easier to compare. These losses often appear as replacement rather than outright rejection.

And the most significant losses occur during validation. At this stage, concerns such as risks, downsides, hidden costs, or edge cases arise. Brands that survive earlier stages may still drop out if their proof is weak or narratives are inconsistent.

Finally, some brands disappear just before the decision point. They remain present throughout the journey, but when the model is prompted to choose or determine next steps, another option is selected. This typically indicates a lack of decision signals such as pricing clarity, guarantees, or confidence anchors. Without this information, the model cannot commit.

Diagnosing loss paths involves identifying the exact stage at which a brand disappears and tracing it back to the model's unmet expectations at that moment. Without this visibility, you risk addressing the wrong issues, such as adding more content when the underlying problem is structure, proof, or clarity. Follow our Conversation-First GEO Measurement Guide to discover other key components to measure LLM visibility where decisions are made.
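As a rough sketch of this diagnosis, the snippet below finds the stage at which a brand drops out of each path and tallies the results. Everything here is an assumption made for illustration: the stage labels are taken from the journey stages named earlier in this article, while the data layout, the function names, and the brands are hypothetical. A brand counts as dropped at the first stage after its last mention; paths where it never appears or survives to the end are skipped.

```python
from collections import Counter
from typing import Optional

# Assumed layout: a path is an ordered list of (stage label, brands named) pairs,
# with labels such as "exploration", "narrowing", "comparison",
# "validation", and "decision".
StagedPath = list[tuple[str, list[str]]]


def drop_stage(path: StagedPath, brand: str) -> Optional[str]:
    """Return the stage where the brand disappears for good, or None."""
    last_seen = None
    for index, (_, brands) in enumerate(path):
        if brand in brands:
            last_seen = index
    if last_seen is None or last_seen == len(path) - 1:
        return None  # never appeared, or still present at the final stage
    return path[last_seen + 1][0]


def loss_stage_counts(paths: list[StagedPath], brand: str) -> Counter:
    """Tally how many paths lose the brand at each stage."""
    stages = (drop_stage(path, brand) for path in paths)
    return Counter(stage for stage in stages if stage is not None)


# Hypothetical example: two drops at narrowing, one at validation, one survival.
example_paths = [
    [("exploration", ["Acme", "Bolt"]), ("narrowing", ["Bolt"]), ("decision", ["Bolt"])],
    [("exploration", ["Acme"]), ("narrowing", ["Acme", "Bolt"]), ("validation", ["Bolt"])],
    [("exploration", ["Acme"]), ("decision", ["Acme"])],
    [("exploration", ["Acme", "Bolt"]), ("narrowing", ["Bolt"]), ("decision", ["Bolt"])],
]
print(loss_stage_counts(example_paths, "Acme").most_common())
```

A tally that concentrates on one stage points to the kind of gap to investigate first, for example constraint data when most drops happen at narrowing.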

The next step is to determine what works and how to scale it.

First of all, treat path wins as evidence rather than just outcomes. When a brand consistently wins paths, there are specific reasons behind it, and conversation simulation reveals them. It shows which attributes enable early inclusion, which claims withstand comparison, and which proof signals remain credible during validation. Translating these wins into a plan means converting journey behavior into actionable changes.

The first thing you need to focus on is content structure. Win paths often reveal specific explanations, comparisons, or clarifications that help the model progress confidently. When you carefully analyze these signals, you can discover gaps in category pages, FAQs, comparison sections, and decision-focused content. Close these gaps. That’s it. But keep in mind that your objective is not to produce more content, but to provide content that addresses the model's key questions under pressure.

The second thing that you should pay attention to is data clarity. As we’ve already mentioned, path wins often depend on satisfying explicit constraints. If price ranges, compatibility, geography, availability, limits, guarantees, and other signals are implicit, scattered, or inconsistent, they become unreliable. Consequently, the model won’t recommend your brand. With win paths, however, you can discover the data points to elevate, standardize, or clarify so that the model can understand your brand.

The third thing to focus on is claims and positioning. With path wins identified, you can see which claims the model trusts and which fail under scrutiny. LLMs treat strong claims as concrete, specific, and verifiable, and weak claims as vague, absolute, or unsupported. Understanding this distinction is essential to keeping your brand afloat during validation. If you get it wrong, your brand will sink and be replaced by safer alternatives.

What’s also essential is alignment. If your content, data, and claims consistently reinforce the same narrative at every stage of the journey, wins become repeatable. Otherwise, wins are isolated and disappear as conditions change. For further information on how to improve your content, data, and claims, refer to the following guides:

Where being mentioned is common but being chosen is rare, Path Win Rate deserves a special place. Preference is not established immediately in LLM conversations. It develops gradually as options are compared, constraints are introduced, and risks are evaluated.

Brands that win paths do so by consistently surviving this scrutiny across realistic journeys. Those that lose typically do not fail conspicuously. They fail because the model is no longer confident enough in them to justify their inclusion.

This is why Path Win Rate is a decision-grade GEO metric that shifts measurement from surface-level exposure to competitive outcomes. It identifies which brand the model first anchors to, which brands are replaced, and which remain credible at the decision point.

More importantly, Path Win Rate makes your wins actionable. You can use this metric to analyze successful paths, identify the content structures, data signals, and claims that consistently perform well, and then scale them intentionally. This approach replaces GEO guesswork with targeted reinforcement.

And if your goal for GEO is to influence revenue rather than just awareness, Path Win Rate is the metric that bridges this gap. Track path wins automatically with Genixly to identify when LLMs actually prefer your brand, rather than simply mention it. Contact us for more information.
